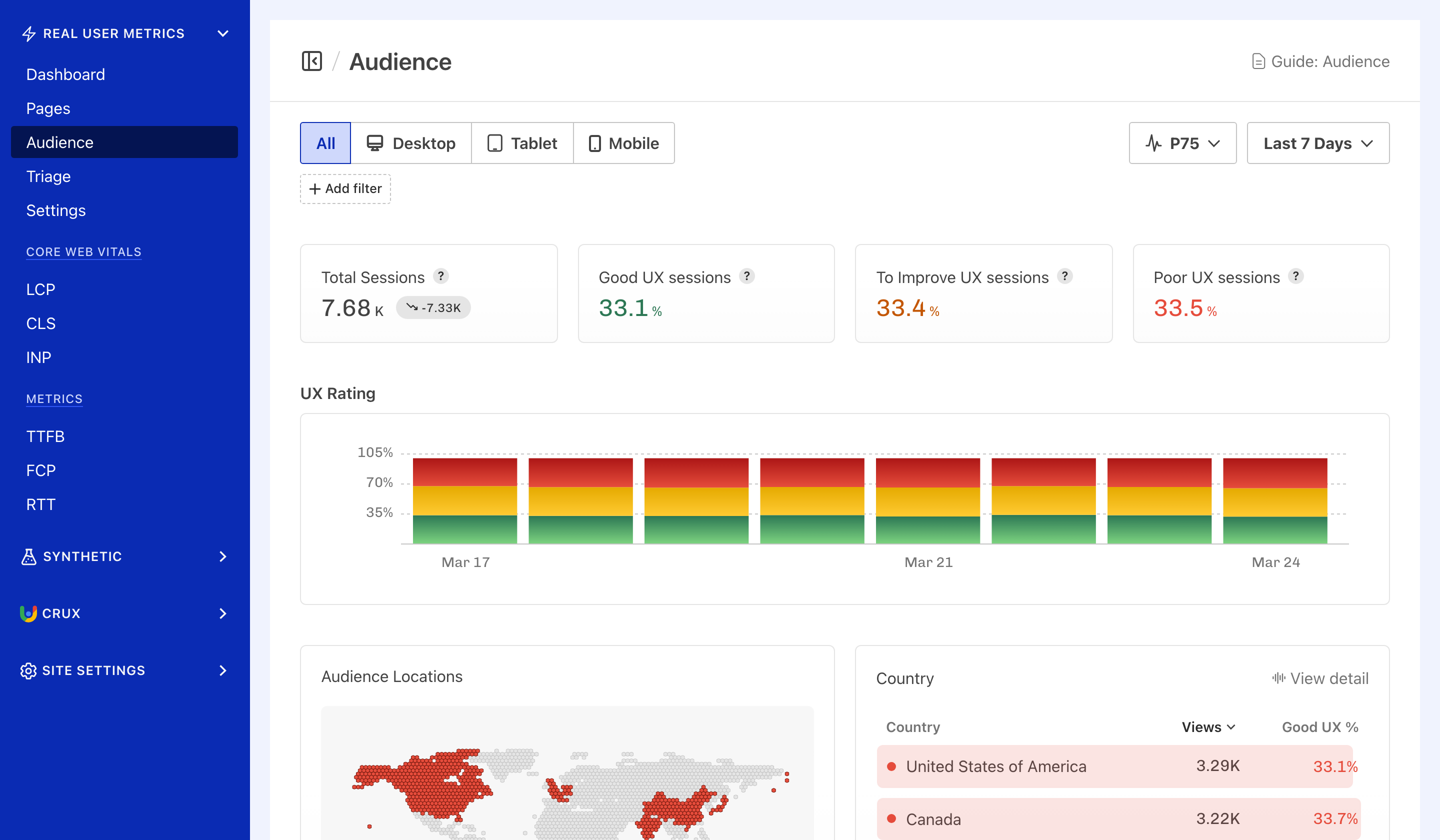

UX Rating shows what percentage of real user experiences are Good, Needs Improvement, or Poor.

A higher "Good" percentage means more of your visitors are having a fast, stable experience.

How is UX Rating calculated?

Each real user session reports a rating per metric (good, needs improvement, or poor) based on Google's recommended thresholds for the Core Web Vitals and Time to First Byte:

| Metric | Good | Needs Improvement | Poor |
|---|---|---|---|
| LCP | < 2.5s | 2.5–4.0s | > 4.0s |
| CLS | < 0.1 | 0.1–0.25 | > 0.25 |
| INP | < 200ms | 200–500ms | > 500ms |
| TTFB | < 800ms | 800–1800ms | > 1800ms |
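As a rough illustration of how a single measurement maps to a rating, here is a minimal Python sketch. The `THRESHOLDS` dictionary and the `rate_metric` function are hypothetical names, not part of the product. Boundary values follow the table as written, so exactly 2.5s for LCP counts as "needs improvement".

```python
# Thresholds copied from the table above as (good_below, poor_above).
# Units: seconds for LCP, unitless for CLS, milliseconds for INP and TTFB.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "CLS": (0.1, 0.25),
    "INP": (200, 500),
    "TTFB": (800, 1800),
}


def rate_metric(metric: str, value: float) -> str:
    """Return 'good', 'needs improvement', or 'poor' for one measurement."""
    good_below, poor_above = THRESHOLDS[metric]
    if value < good_below:
        return "good"
    if value > poor_above:
        return "poor"
    return "needs improvement"


print(rate_metric("LCP", 1.8))   # a 1.8s LCP falls in the "good" band
print(rate_metric("INP", 350))   # a 350ms INP falls between the two thresholds
```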

The system pools all individual metric ratings across all sessions and calculates what percentage fall into each bucket:

- Good UX — the percentage of all metric ratings classified as "good"

- To Improve UX — the percentage classified as "needs improvement"

- Poor UX — the percentage classified as "poor"

UX Rating is not a per-session pass/fail. It’s an aggregate across all four metrics and all sessions. For example, if you had 1,000 sessions and each reported 4 metrics, that's 4,000 individual ratings. If 3,200 of those were "good", the Good UX percentage would be 80%.
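The arithmetic in that example can be sketched in a few lines of Python. The `ux_rating` function is a hypothetical helper, and the split of the 800 non-good ratings between "needs improvement" and "poor" is made up for illustration. Only the 3,200 "good" ratings come from the example above.

```python
from collections import Counter

BUCKETS = ("good", "needs improvement", "poor")


def ux_rating(ratings):
    """Return the percentage of pooled metric ratings in each bucket."""
    counts = Counter(ratings)
    total = sum(counts[b] for b in BUCKETS)
    if total == 0:
        return {b: 0.0 for b in BUCKETS}
    return {b: 100 * counts[b] / total for b in BUCKETS}


# 1,000 sessions x 4 metrics = 4,000 ratings, 3,200 of them "good".
# The 500/300 split of the remainder is an assumption for this sketch.
pooled = ["good"] * 3200 + ["needs improvement"] * 500 + ["poor"] * 300
result = ux_rating(pooled)
print(result["good"])  # 80.0
```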

Missing metrics are excluded, not penalised

If a visitor loads a page but never interacts with it (no click, tap, or keypress), there’s no INP value for that session. That session still contributes its LCP, CLS, and TTFB ratings, but it adds nothing to the INP pool: no zero and no penalty. UX Rating counts only the metrics that were actually observed for each visit.
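One way to picture this is a pooling step that simply skips metrics with no value. The sketch below assumes each session is a dictionary of metric ratings with `None` for an unobserved metric. That data shape and the `pool_ratings` name are illustrative assumptions, not the product's internal format.

```python
def pool_ratings(sessions):
    """Flatten per-session ratings into one list, skipping missing metrics."""
    return [
        rating
        for session in sessions
        for rating in session.values()
        if rating is not None
    ]


sessions = [
    {"LCP": "good", "CLS": "good", "INP": "good", "TTFB": "good"},
    # The visitor never interacted, so INP was not observed.
    {"LCP": "good", "CLS": "poor", "INP": None, "TTFB": "good"},
]

pooled = pool_ratings(sessions)
good_pct = 100 * pooled.count("good") / len(pooled)
print(len(pooled))  # 7 ratings are pooled, not 8
```

The second session contributes three ratings instead of four, so the Good percentage is computed over seven ratings rather than eight.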

Where to find UX Rating

Navigate to Site → Real User Monitoring → Audience to see UX Rating displayed as overall percentages of Good, Needs Improvement, and Poor user experiences, as well as a bar chart over time.

How to improve your UX Rating

Since UX Rating is a composite of four metrics, improving any one of them will lift your overall rating.

Focus on the metrics where the largest share of ratings fall outside "good":

- Improve Largest Contentful Paint — optimise images, reduce server response times, eliminate render-blocking resources.

- Improve Cumulative Layout Shift — set explicit dimensions on images and embeds, avoid inserting content above existing content.

- Improve Interaction to Next Paint — reduce JavaScript execution time, break up long tasks, optimise event handlers.

- Improve Time to First Byte — use a CDN, optimise server-side processing, enable caching.
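To decide which of these metrics to tackle first, you could compare the share of ratings outside "good" for each one. The following sketch assumes you have exported ratings as `(metric, rating)` pairs. The `non_good_share` function and the sample data are hypothetical.

```python
from collections import defaultdict


def non_good_share(observations):
    """Map each metric to the percentage of its ratings that are not 'good'."""
    totals = defaultdict(int)
    non_good = defaultdict(int)
    for metric, rating in observations:
        totals[metric] += 1
        if rating != "good":
            non_good[metric] += 1
    return {m: 100 * non_good[m] / totals[m] for m in totals}


# Made-up sample: half of the LCP ratings and a quarter of the CLS ratings
# fall outside "good".
observations = [
    ("LCP", "good"), ("LCP", "poor"),
    ("LCP", "needs improvement"), ("LCP", "good"),
    ("CLS", "good"), ("CLS", "good"),
    ("CLS", "good"), ("CLS", "poor"),
]

shares = non_good_share(observations)
worst_first = sorted(shares, key=shares.get, reverse=True)
print(worst_first)  # ['LCP', 'CLS']
```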

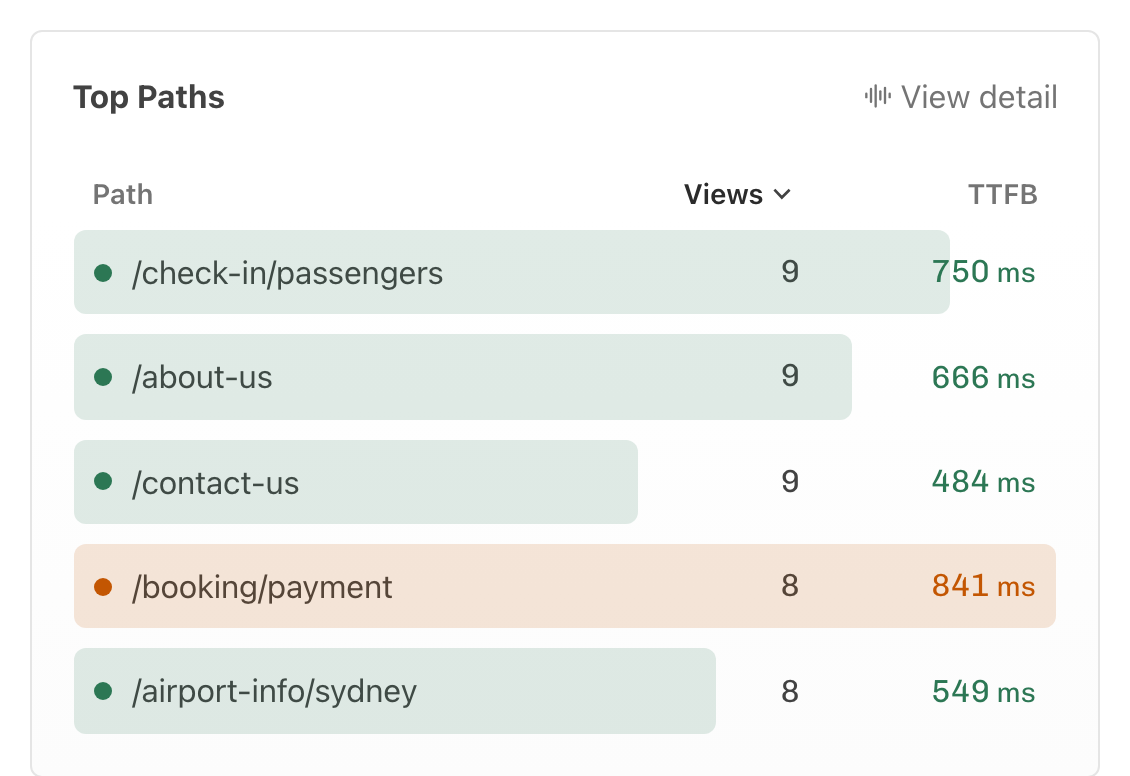

Use segmenting and filtering to locate Pages, Elements, or Page Groupings that have:

- The highest number of views (affecting more users)

- Poorly rated performance (indicated in red)

Using this approach, you can locate the highest-impact issues and fix them one at a time. Fixing the most glaring performance problems on your highest-traffic pages is the quickest way to turn poor experiences into good ones for the largest number of visitors.
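If you prefer to rank candidates numerically, one simple heuristic is to multiply views by the share of poor ratings to estimate how many poor experiences each page produces. This is an assumption for illustration rather than how the product ranks pages, and the `PageStats` class, the paths, and the figures below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    path: str        # hypothetical page path
    views: int       # page views in the selected period
    poor_pct: float  # percentage of ratings classified as "poor"


def rank_by_impact(pages):
    """Sort pages by estimated number of poorly rated views, highest first."""
    return sorted(pages, key=lambda p: p.views * p.poor_pct / 100, reverse=True)


pages = [
    PageStats("/", 50_000, 5.0),           # about 2,500 poor views
    PageStats("/checkout", 8_000, 40.0),   # about 3,200 poor views
    PageStats("/blog/post", 1_000, 60.0),  # about 600 poor views
]

ranked = [p.path for p in rank_by_impact(pages)]
print(ranked)  # ['/checkout', '/', '/blog/post']
```

Note that the page with the worst percentage is ranked last here because so few visitors see it, which is why both views and rating matter.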

Frequently asked questions

Why Core Web Vitals and Time to First Byte?

Core Web Vitals are the current gold-standard set of performance metrics in the browser. They correlate strongly with how users perceive both the speed and the overall quality of their experience.

Time to First Byte is a critical metric because it highlights a delay that users feel on every visit to your site. A fast TTFB keeps users engaged and means pages can initialise more quickly.

By aggregating these metrics, we can quickly gauge overall render speed, page stability, and interaction responsiveness.

Google’s Core Web Vitals assessment partially overlaps with UX Rating, in that both evaluate your pages by the percentage of Good user experiences.